The Category Error, Part 1: Why gender explains less than we think

The first in a multi-part series tackling the logical fallacy at the heart of our gender debates, and offering a proposal for moving forward

This essay is Part 1 in a series examining the enduring traps of gender distinctions. Rather than arguing for or against any particular definition, the series asks a more basic question: does our continued reliance on gender as an explanatory model provide clarity, or does it obscure a truer view of ourselves and our society? Part 2 of the series is “Life before breadwinners.”

I brace myself when I see a Substack post on motherhood. Most end up arguing a personal view on how women should be. Because to talk about mothers, after all, means talking about gender.

Through my kids, I know what it is to have your heart go walking around outside your body. Even still, I don’t recognize myself in these posts. I never wanted to breastfeed. Too much touch feels overwhelming. I didn’t want a year of maternity leave; returning to work felt restorative. I’m not naturally suited to the daily work of childcare. I’m a lawyer, and my job is far easier than our nanny’s — at least to me.

But this essay isn’t about my personal perspective or contemporary debates. Instead, it steps back to ask a more basic historical and philosophical question: what work the category of gender has been asked to do, and why public discussions among women, about women, so often feel unsatisfying and incomplete.

The authors of these posts are incredibly different from each other; women are a varied bunch. By treating women as a single group, they, and we, are making a category error.

The idea that being a woman means that, at some essential level, we feel, think, desire, and burn out in the same ways and in contrast with men collapses under the lightest scrutiny — just check reactions in the comments. Yet it remains the unspoken premise of our contemporary discourse.

Before we dig in: If you like what you read, please take a second to show it! Click like, comment, restack it (with a note!)—that breaks through the algorithm and helps people find my writing.

Organizing ourselves by gender is an ancient practice. Indeed, gender has not just survived over millennia, but flourished as our go-to model for explaining human behavior, despite forceful efforts by thinkers like Judith Butler and Simone de Beauvoir to dislodge it.

By gender, I mean the social category a person is placed in based on perceived biological sex. It is sex-based in origin but social in operation: a classification system that assigns expectations, roles, and meanings, expanding far beyond the reproductive imperatives of biological sex.

Once an individual is placed into such a system — and we all are — a reaction happens. The classification may fit well enough, producing a sense of ease or coherence. Or it may chafe, like wearing ill-fitting shoes. It is here, at the point of collision between person and category, that questions of identity arise. We may embrace the category we are assigned, resist it, reinterpret it, or orient ourselves toward another altogether.

Gender, in this sense, floats over sex like a balloon: tethered, but loosely.

Mapping opposite qualities onto man and woman goes back eons: Adam and Eve; yin and yang; mother earth and father sky. Their durability has lent gender distinctions an aura of inevitability and profundity, encouraging the sense that human nature is fundamentally gendered.

But this isn’t just a matter of cultural inheritance. Our attraction to such models reflects something deeper about how the human mind works.

As humans, we’re drawn to models that put people into categories: the simpler, the better.

We love compression models. Human cognition is optimized for speed and efficiency, not accuracy. Rather than evaluate each situation or person on the full set of available facts, we rely on cognitive shortcuts: rules of thumb that allow us to assess quickly and move on.

These shortcuts are all around us. Astrology, which is enjoying a resurgence, offers a colorful example. Faced with a difficult decision — take the job, start the business — you could carefully weigh every last variable. Or you could consult your horoscope.

Religion works similarly, supplying a preloaded decision tree. You obey your parents not because they are right in every instance, but because a commandment establishes the hierarchy. You oppose gay marriage not because you’ve examined the issue afresh, but because a religious authority instructed you to. There is deep comfort in outsourcing judgment, especially to models that promise clarity by dividing a complicated and fractious world into right and wrong, good and bad.

Other compression models operate without our knowing it. Decades of research on implicit bias show that we are primed to explain human behavior through categories like race. The actual causes of our decisions frequently remain opaque to us; the brain supplies post-hoc justifications that create the illusion of deliberate choice.

This has been the consistent finding of split-brain research, in which a command to do something (like laugh, or draw a banana) is given to just one side of the brain. The person complies, laughing or drawing a banana, and the brain’s other side is then asked why they did it. Rather than answer “I don’t know,” it invents an entirely fictitious reason for the action and believes it to be true: I laughed because you guys are so funny! Rather than admit we lack access to the reasons for our behavior, our brain convinces us we’re fully aware and in control.

We also cling to compression models long after their flaws are apparent. This finding recurs across disciplines. Psychologists Daniel Kahneman and Amos Tversky famously demonstrated that humans are not rational actors, but systematic users of biased heuristics. Cognitive neuroscience arrived at a parallel conclusion. Karl Friston and Andy Clark have argued that the brain functions as a prediction engine, seeking to minimize surprise by developing early, coarse-grained models that harden in place.

Most relevant to this essay is work in developmental psychology on essentialist bias. Susan A. Gelman has shown that humans instinctively treat certain categories as reflecting deep, underlying essences, and that we do not treat all categories equally. When explaining a person’s behavior, we privilege categories like gender and race over innumerable alternatives: profession, education, birth cohort, or personal history.

Jean-Paul Sartre once described a woman who claimed her dislike of Jewish people stemmed from a bad experience with Jewish furriers. Why was the lesson to distrust Jews, Sartre asked, rather than furriers? Gelman’s research helps explain why certain categories serve as explanatory magnets.

It also helps explain why Sartre — and the rest of us — often default to gender when referring to an unnamed individual. Rather than profession, nationality, age, or simply the neutral label “person,” we most readily identify people by gender. That’s the descriptor we consider most illuminating. In Sartre’s anecdote, it was specifically a “woman” who distrusted Jews.

Developmental research shows that this tendency arises early. As The Social Brain: A Developmental Perspective puts it:

A number of laboratories have found that for the category of gender, essentialism emerges early and in robust fashion across a range of societies, whereas for other categories, including race, ethnicity, nationality, socioeconomic status, or team membership, essentialism arises inconsistently, and often only at a later age if at all. It is notable that gender and race are treated differently from one another, given that both have visible correlates that children detect from an early age.

Essentialist stereotypes are easily acquired, the research indicates. But as Kahneman, Clark, and others have shown, once in place, they are dislodged only with great difficulty.

It’s no surprise, then, that gender, sitting at the top of our explanatory hierarchy, has accumulated more and more explanatory freight over time.

But you might be surprised by how, at various points in history, the strong gale of gender stereotyping has changed direction.

Take friendship. Aristotle believed that only men were capable of it. Women lacked the necessary rationality and individual agency to form friendships. This stereotype lived on through the Renaissance, when Michel de Montaigne wrote his famous essay “On Friendship.” Montaigne wanted his readers to know that none of the essay’s beautiful passages applied to women, because they were incapable of “this sacred bond.” It wasn’t until the 19th century that the stereotype began to reverse. Today, women are widely supposed to be better at friendship, based in turn on the stereotype that they are more socially and emotionally attuned (the reverse of what Aristotle and Montaigne believed). Men (heterosexual men, is the unstated qualifier) are thought to be relationally challenged and dependent on women (wives, girlfriends) for emotional connection. Aristotle and Montaigne would find our modern thinking incoherent. [1]

Or, take morality. Women used to be considered fundamentally immoral. Plato described earth as a moral proving ground for men, and those who failed life’s morality test were punished by being sent back to earth as women. Women, under this view, were morally defective men. Today, women are more often cast as the moral spine of society: more ethical, more responsible, and more self-regulating than men. [2]

Relatedly: sexual urges. In Europe, the belief that women were more lustful and unfaithful than men persisted for centuries. Witchcraft manuals like the Malleus Maleficarum claimed that women were more sexually driven: “All witchcraft comes from carnal lust, which is in women insatiable.” Society wrung its hands over the problem of women’s insatiable lust. Yet by the Victorian era, elite European societies increasingly believed the opposite. Women were assumed (and thus expected) to be naturally uninterested in sex. Men, by contrast, needed to “sow their wild oats.” Today, women are often considered more naturally monogamous, while men are excused as naturally wayward, a near inversion of the medieval view.

How about fashion? In early modern Europe, especially France, elite male fashion was far more elaborate than female fashion. That flipped in the late 18th and early 19th centuries in what historians call the “Great Male Renunciation,” [3] after which ornament, color, and display were deemed frivolous and became coded as feminine, while sober apparel was coded masculine.

It’s thought today that wanting to wear a dress or skirt is an inherently feminine impulse, but there’s no logic to this. Pants are a recent convention. From the classical Greek and Roman perspective, pants were worn by tribal peoples (both men and women, particularly on horseback) and thus deemed barbaric. In western culture, pants did not harden into exclusively masculine attire until the late 18th and early 19th centuries.

It’s almost as though every human quality must be set up as a pair of opposites and then divided by gender. If men are strong, then women must be weak. If women are nurturing, then men must be neglectful. If men are ambitious, then women must be unassuming. If men desire power, then women must desire subordination. How the qualities are divided matters less; the vital thing is that a divide is made.

Oddly, the two-party political system in the US works the same way. Once a cause is linked to one party, the anti-cause is linked to the other. This is such a well-grooved track that people worry that Republicans’ pro-natalism will produce anti-family positions on the left. We see how irrational this is, yet politicians and the politically active fall in line. Habitual polarization is bad enough in politics; when used to define the nature and limits of individual lives, it’s destructive.

Anthropologists have documented this dynamic in other cultural contexts as well. In the 1930s, Gregory Bateson described how groups come to define themselves through opposition, amplifying differences that began as minor or contingent. Once contrast becomes identity, behavior follows. If a society organizes itself by gender, the performance of aggression by men may be countered with a performance of meekness by women — not because either is essential, but because each becomes defined against the other. Over time, the contrast hardens.

These examples offer a clear caution: don’t mistake society’s gender mappings for inherent truth. They are contingent constructions.

Once you begin to appreciate the inconstancy of gender conventions, this next assertion should not be surprising: There have always been people who did not conform to gendered expectations — and societies have always had to figure out what to do with them.

What’s interesting is how nonconformity has been handled at a structural level.

Three strategies recur: expanding the gender categories, moralizing deviation, and pathologizing difference. Together, they illuminate today’s gender wars, which range from how families should be constituted and how they should distribute labor to what forms of gender discrimination are acceptable, what kinds of relationships should be legally recognized, and what we should tell our children about gender.

Expanding the gender categories

A popular talking point is that there are only two genders, and that claims to the contrary represent a radical, hyper-modern break from tradition. That’s wrong. Across cultures and centuries, humans have repeatedly created additional gender categories for people who did not fit the man-woman binary.

In traditional Hawaiian and wider Polynesian societies, the māhū were people typically assigned male at birth who occupied a distinct social category associated with women’s roles, labor, and forms of social life. They were not simply treated as women, nor as men, but as something else — a recognized category with hybrid characteristics. What’s striking is how widespread this category was across Polynesian cultures.

Rather than arguing over whether a male-assigned person “really” counted as a man or a woman, these societies abandoned the strict binary itself. The binary remained useful, but it was not treated as exhaustive.

European observers who encountered the māhū in the late 18th century reacted with fascination and revulsion. A British naval officer writing of Tahiti noted:

They have a set of men called māhū. These men are in some respects like the eunuchs of India but they are not castrated. They never cohabit with women but live as they do. They pick their beards out and dress as women, dance and sing with them and are as effeminate in their voice. They are generally excellent hands at making and painting of cloth, making mats and every other woman’s employment.

Similar solutions appeared elsewhere: the hijras of South Asia, the Navajo nádleehi, and the Lakota winkte. In each case, societies explicitly made room for exceptions to the man-woman binary.

For these groups, the two-gender model functioned as a tool, not a dictate.

Moralizing deviation

Christian Europe took a different approach to gender nonconformity. Conformance with gender expectations was treated as a moral and religious matter. To enforce the moral and religious authority of Christian institutions, nonconformance would not be tolerated.

There were various tools for enforcing the two-gender binary and its dictates. Consider witch hunts. Accused witches were often unmarried women who were economically independent, intellectually curious, and sexually irregular. They failed to conform to gender rules, and their failure to conform was the very evidence that condemned them.

Thus, unlike the cultures above, which defended the essential validity of gender categories by allowing them to bend and expand, European Christian societies demanded that people adhere to the man-woman model — or else.

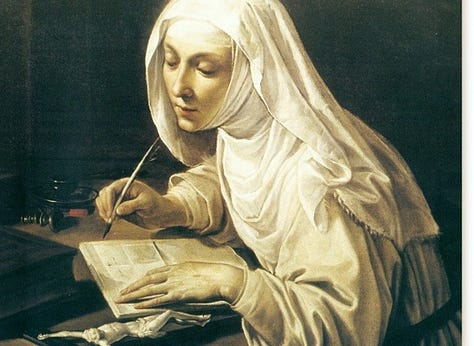

Or so it appears. But look closer and you’ll see that Christian Europe also made room for exceptions. Not in terms of labeling, but by authorizing a non-conforming life path.

If a woman remained unmarried and childfree in medieval Europe, she was seen as socially suspect and morally unstable, a potential witch even… unless she married Christ. In that case, her childfree status became a sign of religious devotion.

Convents permitted women to be childfree without censure. They could also pursue education, create art, take leadership roles in their community, and engage in countless other activities that were discouraged or forbidden for secular women.

For men, religious orders offered an alternative to the masculine script of the era. Men were expected to marry, reproduce legitimately, defend land and honor through violence, and fulfill social obligations. For some men, especially elite younger sons, this included military service in Europe’s frequent wars. A man who had a nonviolent nature, did not desire marriage, or simply wanted a quiet life outside society could find refuge in a monastery.

Life in a religious house was not paradise. But paradoxical as it seems to us today, for many women and men, convents and monasteries afforded a measure of freedom and acceptance. They offered a gendered exit ramp.

This is not just a modern gloss on history; people actually made this calculus. Take Sor Juana Inés de la Cruz.

Born illegitimate to a criolla mother and a Spanish captain in Mexico, then the colony of New Spain, she possessed a Leonardo-like hunger to master the world through understanding.

She knew that to live how she wanted, she’d need to game the rules. As a child, she even proposed dressing as a boy to attend university. Her mother laughed. But she honed her strategy. She saw that to pursue a life of study and creativity — free from the interruptions of family obligation — she must become a nun.

Inés de la Cruz is an extraordinary case. As a nun, she wrote poetry and plays that her supporters published across the ocean in Spain to great acclaim. Like Sappho before her, she became known as the “Tenth Muse.” Most of us are not an Inés de la Cruz, but it would be wrong to conclude that she was wholly unique. Other nuns were reading, writing, composing, and painting but in most cases their pursuits — like they themselves — remained cloistered behind walls.

Of course, religious orders weren’t free-for-alls. Nuns and monks traded secular gender conventions for a different set of conventions. But something was gained. Taking orders unlocked a realm beyond the prevailing dictates of man and woman. Religious orders offered socially acceptable channels for gender nonconformity: a kind of life-path exemption.

Pathologizing difference

Though western societies gradually broke free from the church’s authority, they were still conditioned by its dictates. The gender binary, on which every aspect of society had been built, was not to be discarded. For medical, legal, and social authorities, its validity was taken for granted. But lacking the church’s prerogatives of faith, they needed a new rationale to defend traditional gender categories.

A solution emerged: treat deviations as defects of the individual. Pathologize them. In the 19th and early 20th centuries, females who did not desire motherhood were diagnosed as disordered. Males who desired men were classified as diseased. Female aggression was medicalized as hysteria.

Pathology replaced sin as the regulatory model, offering a secular mechanism for enforcing the same boundaries.

The new system works on multiple levels. First and most effectively, disease carries stigma. To avoid the shame of disease, you mask your “symptoms.” Men cross-dress in private. Women stifle anger under forced smiles. Before any explicit intervention, people fall in line through self-regulation.

Next, those who don’t suppress their difference are officially marginalized. Diagnoses are made, justifying all sorts of corrective measures: institutionalization, sterilization, forced therapies. The population is cut in two again: there’s the healthy, conforming majority, and the unhealthy, deviant margin.

Because no third category is permitted, ambiguity cannot be tolerated. The binary is enforced at our earliest moments, as when “intersex individuals are surgically transformed as infants to ‘fit’ into a single gender category.”

Though it claims to be based in science, the pathologizing strategy bypasses the threshold question of whether the binary model is valid. The engine is circular. If you don’t fit your conventional gender category, then by definition, you’re disordered. And if the people who don’t conform are all disordered, then conformance must be natural and right.

Under this third path, when exceptions to the binary model emerge, it’s not evidence that the model is flawed. It’s evidence that the individual is flawed. Though different in specifics from the moralizing path, it serves the same function: preserving the integrity of the two-gender model.

My goal so far has been to persuade you that gender nonconformity has long existed, and that it exists because gender has long been used to compress human variation into overloaded social categories. Yet in today’s iteration of this ongoing tug-of-war, one aspect is unprecedented.

Society today is the collision of the three paths

Though methods for dealing with gender deviation have differed, past societies have more or less agreed within themselves on the method to use. Individual dissent must have been present, but there was broad agreement on the norms. This meant society didn’t get tripped up over questions of gender. It had a method for summarily dealing with them.

That’s not true of society today. In the present-day US, all three strategies co-exist, and they collide in law, society, and politics.

Debates over whether someone born as a “woman” can truly be a “man,” whether homosexual relationships should be ratified by the state, whether the unapologetic lifestyle of a gender nonconforming person is a danger to children, and so many others, can never be resolved so long as the debaters hold different views on what nonconformance means — whether it’s a natural and inevitable deviation from categories that are merely descriptive, whether it’s a sign of failure against a static moral code, or whether it’s a mark of individual disorder.

If you detach yourself from your instinctive path — expanding, moralizing, or pathologizing — and simply witness how these paths inevitably collide, you’ll be rightly pessimistic that our present debates are resolvable. So long as gender remains society’s central organizing category, and so long as we disagree over what nonconformity signifies and how it should be accommodated or dealt with, these debates will continue to intensify.

Final thoughts — for now

Nonconforming people have always existed. What has changed is how societies respond to this fact, and how much explanatory weight we ask gender to carry.

Today, we are trying to patch a model that was never equipped to explain who we are, why we do the things we do, and how we should be. We argue endlessly over the significance of gender and where to draw gender boundaries. It’s dialectical quicksand. The more we organize our thinking around gender, the more stuck we become.

My aim is to persuade you that our reliance on gender as an explanatory framework is the upstream flaw behind these debates.

At this point, a routine counterargument suggests itself.

Maybe the problem isn’t the use of gender itself; maybe the problem is modernity. We’ve overcomplicated these questions. If gender no longer works today as an organizing principle, perhaps it made more sense once — before modern excess, before abstraction, before everything became so overwrought.

That objection has intuitive appeal, and it’s the next thing that needs to be examined.

Looking further down the road, once that question is settled, a harder one follows: if gender was never the stable foundation we imagine, what kind of model would serve us better?

The next essay in this series examines whether gender divisions worked better in the past, and how nostalgia veils reality from us.


[1] My discussion of gender and friendship is indebted to Tiffany Watt Smith’s Bad Friend, which I cannot recommend enough. It sits in the Obsessive Investigation micro-genre I traced in my Electric Literature piece.

[2] For more on gender, morality, and lust in medieval times, I highly recommend The Once and Future Sex: Going Medieval on Women’s Roles in Society by Eleanor Janega.

[3] Hat tip to aelle for introducing me to this phenomenon!

I really enjoyed this post, and I appreciate the amount of thought and research you put into it.

It's interesting that you say, "As humans, we’re drawn to models that put people into categories: the simpler, the better," because that, in itself, is a cognitive shortcut: "All humans think like this."

I won't say that your claim isn't generally true, as I've not studied every culture in the history of the world. I do see lots of people online making black and white assertions after jumping to wild conclusions, but I believe this is because algorithms distort our view of reality. We're all shown a slightly different version of it--one that's usually an echo-chamber that supports whatever we've already decided is true, making our claims and beliefs more and more rigid and insulated. Is this human nature or is it merely another social construct? I feel like stubbornness, knee-jerk reactions, rigidity, close-mindedness, etc. are far more socially acceptable now than they used to be.

In real life, when people are thinking independently of algorithms, and when we're not living in fear (I think anxiety tends to exacerbate cognitive shortcuts) I believe a lot of us are willing to let complexity simply exist as it is.

Thanks for giving me more ammunition against the chauvinistic male thinkers who we’re still supposed to revere as fathers of western thought ❤️ Also love hearing about how gender diversity has historically been integrated in Polynesian cultures, showing us a map out of our current exclusionary binary model. Looking forward to the rest of the series!